AlphaEvolve: A Step Towards Self-Evolving AI Agents

Google DeepMind's evolutionary coding agent uses Gemini to generate, test, and evolve code, breaking a 56-year-old math record and optimizing Google's own infrastructure.

Google DeepMind released AlphaEvolve, an evolutionary coding agent that orchestrates LLMs to generate, evaluate, and iteratively refine code. It has already broken a 56-year-old matrix multiplication record, recovered about 0.7% of Google's fleet-wide compute that was previously stranded, and sped up a Gemini training kernel by 23%. This post covers how it works, how it compares to its predecessor FunSearch, and what the results mean.

TL;DR: AlphaEvolve pairs Gemini Flash (high volume) and Gemini Pro (high quality) inside an evolutionary loop: generate code variants, evaluate them against user-defined metrics, keep the best, repeat. It evolves entire files (not just single functions), supports any language, and uses orders of magnitude fewer LLM samples than FunSearch. Key results: the first improvement over Strassen's algorithm for 4x4 complex matrix multiplication in 56 years, about 0.7% of Google's fleet-wide compute recovered through a better Borg scheduling heuristic, and a 23% kernel speedup for Gemini TPU training.

AlphaEvolve hero image (Source: Google DeepMind)

The problem it solves

LLMs are strong at generating hypotheses and designing experiments, but turning that into entirely new, verified discoveries has been harder. The bottleneck is evaluation: without a reliable way to score candidates automatically, the search space is too large to navigate.

AlphaEvolve targets problems where solutions can be automatically tested. Users provide a scoring function, and the system handles the rest: generating candidates, evaluating them, and evolving the best ones forward. Problems that require manual experimentation or lack a machine-gradeable metric remain outside its scope.
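As a rough illustration of what "provide a scoring function" could look like, here is a minimal sketch. The function name `evaluate`, the sorting task, and the metric names are hypothetical choices for this example, not AlphaEvolve's documented interface.

```python
import time
from typing import Callable, Dict, List


def evaluate(candidate: Callable[[List[int]], List[int]]) -> Dict[str, float]:
    """Score a candidate sorting routine (hypothetical example task).

    Returns machine-gradeable metrics; higher is better for both.
    """
    test_cases = [[3, 1, 2], [], [5, 5, 1], list(range(50, 0, -1))]
    start = time.perf_counter()
    correct = 0
    for case in test_cases:
        try:
            if candidate(list(case)) == sorted(case):
                correct += 1
        except Exception:
            pass  # a crashing candidate earns no credit for this case
    elapsed = time.perf_counter() - start
    return {
        "correctness": correct / len(test_cases),
        "speed": 1.0 / (1e-9 + elapsed),  # small constant avoids division by zero
    }
```

The point of the sketch is that the score is computed with no human in the loop: a candidate that crashes or returns wrong output is penalized automatically, which is what makes large-scale search feasible.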

How it works

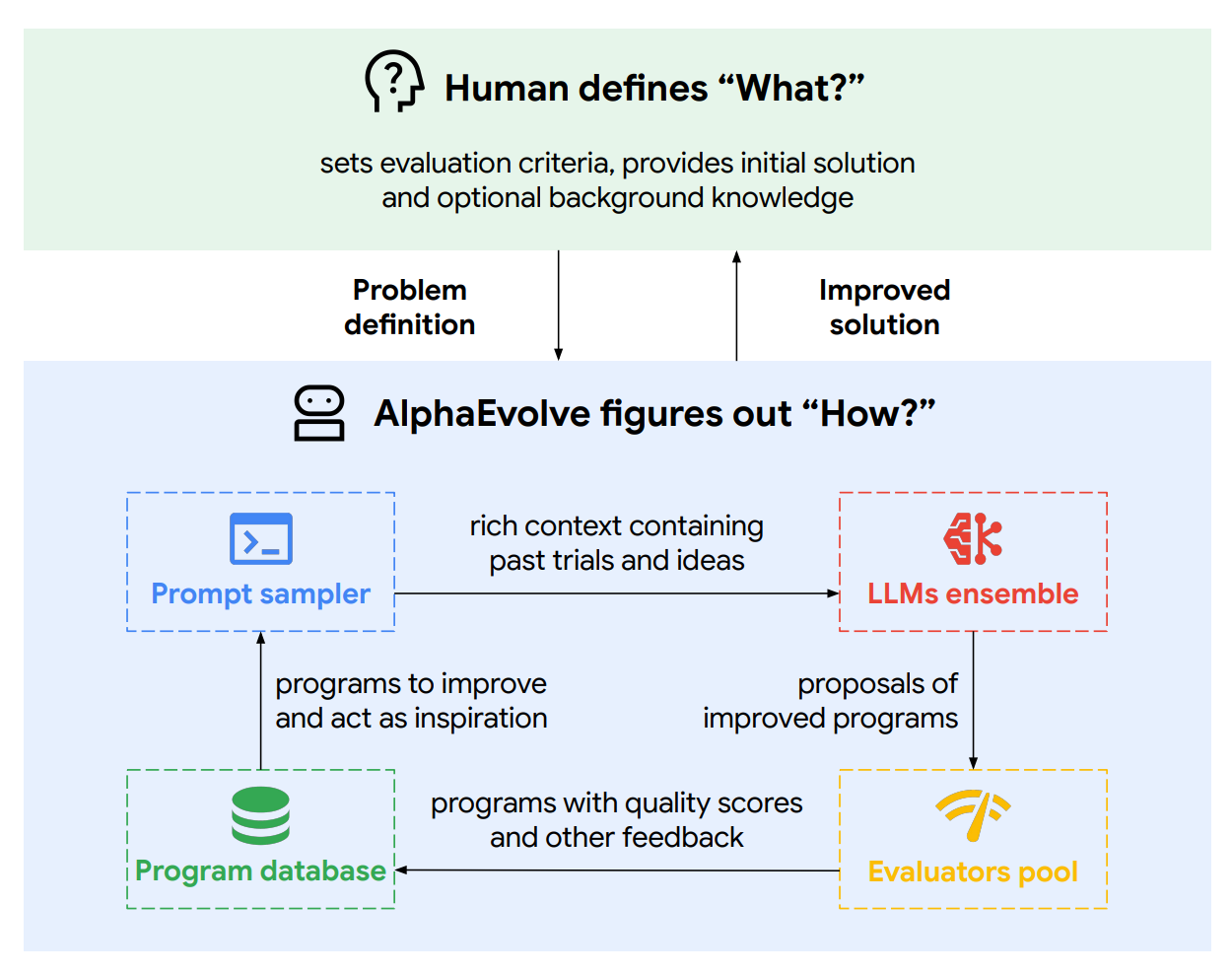

AlphaEvolve architecture (Source: Google DeepMind)

AlphaEvolve runs an autonomous loop of variation, evaluation, and selection.

The evolutionary loop

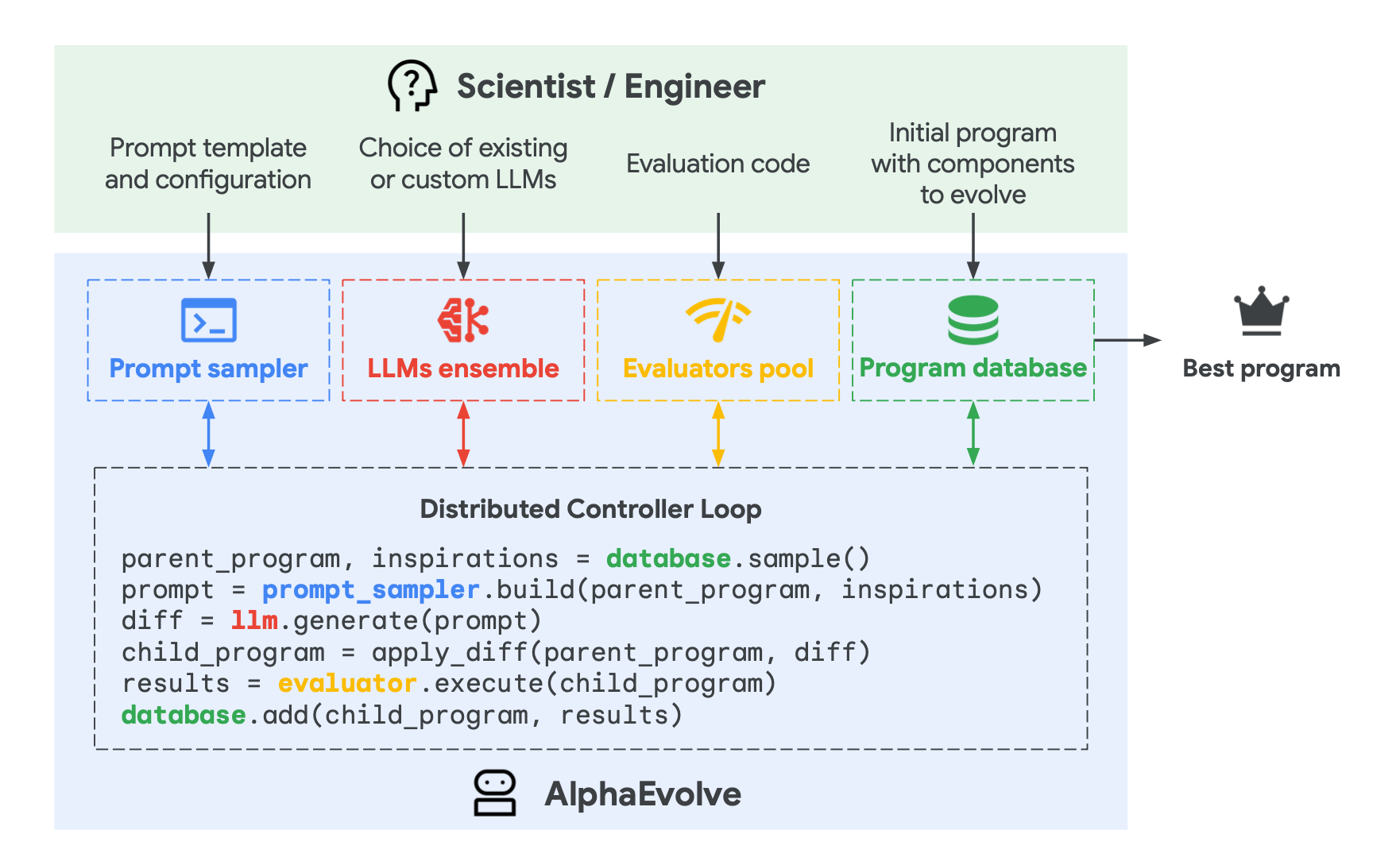

AlphaEvolve discovery process (Source: Google DeepMind)

The process starts with an initial program (even a rough draft works). AlphaEvolve generates a pool of variations using two models in tandem: Gemini Flash for high-volume, low-latency candidate generation, and Gemini Pro for slower, higher-quality mutations capable of larger structural changes.

Each candidate is immediately evaluated against user-defined metrics. Weak candidates are filtered out. Stronger ones seed the next generation.
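The generate, evaluate, select cycle described above can be sketched in a few lines. This is a toy under stated assumptions, not AlphaEvolve's implementation: `evolve`, `toy_mutate`, and `toy_score` are hypothetical names, programs are plain strings, and a random character edit stands in for the LLM-proposed change.

```python
import random
from typing import Callable, List, Tuple


def evolve(
    initial: str,
    mutate: Callable[[str, random.Random], str],
    score: Callable[[str], float],
    generations: int = 20,
    children_per_generation: int = 8,
    survivors: int = 4,
    seed: int = 0,
) -> Tuple[str, float]:
    """Toy evolutionary loop: vary, evaluate, keep the strongest."""
    rng = random.Random(seed)
    population: List[Tuple[float, str]] = [(score(initial), initial)]
    for _ in range(generations):
        children = []
        for _ in range(children_per_generation):
            _, parent = rng.choice(population)     # pick a surviving program
            child = mutate(parent, rng)            # stand-in for an LLM edit
            children.append((score(child), child)) # evaluate immediately
        # Weak candidates are dropped; the strongest seed the next generation.
        ranked = sorted(population + children, key=lambda p: p[0], reverse=True)
        population = ranked[:survivors]
    best_score, best = population[0]
    return best, best_score


def toy_mutate(program: str, rng: random.Random) -> str:
    """Replace one random character (hypothetical stand-in for a model)."""
    i = rng.randrange(len(program))
    return program[:i] + rng.choice("abcdefghijklmnopqrstuvwxyz") + program[i + 1:]


def toy_score(program: str) -> float:
    """Count positions that match a fixed target string."""
    return float(sum(a == b for a, b in zip(program, "hello")))


best, best_score = evolve("aaaaa", toy_mutate, toy_score)
```

Because parents stay in the pool alongside their children, the best score never decreases from one generation to the next, even when every mutation in a round is harmful.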

The evaluation cascade

Several mechanisms make this effective:

- Program database. AlphaEvolve maintains a database of solutions with their scores, balancing exploration (diverse new ideas) and exploitation (refining the best existing solutions) to avoid local optima.

-

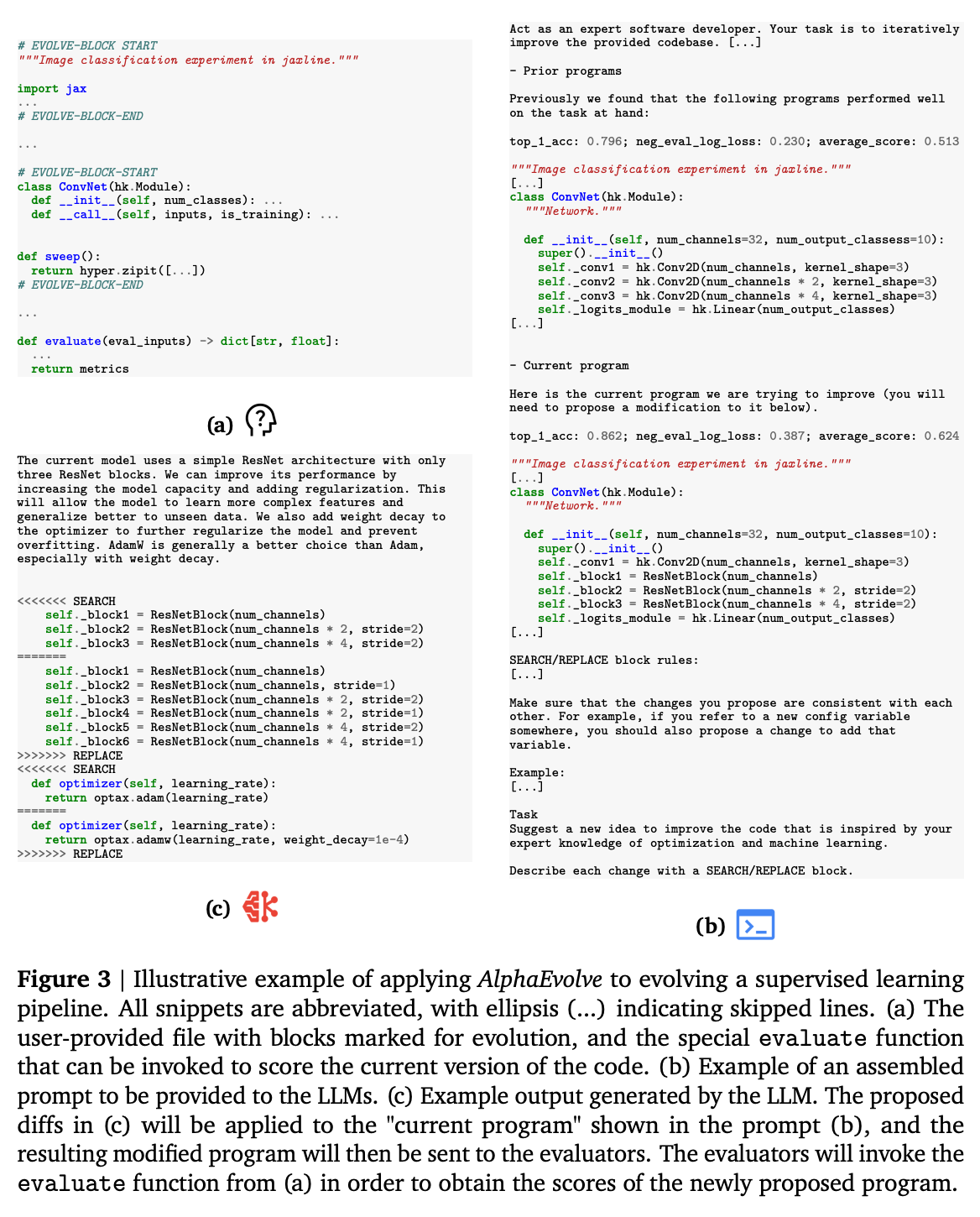

LLM-based code generation. The system requests changes in diff format for precise, targeted updates. For short segments or complete overhauls, it generates entire code blocks from scratch.

LLM code generation in AlphaEvolve (Source: Google DeepMind)

LLM code generation in AlphaEvolve (Source: Google DeepMind)

- Progressive difficulty. New solutions are tested on easier cases first. Only candidates that pass proceed to harder evaluations. This filters out faulty programs early, saving compute.
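The progressive-difficulty idea from the list above can be sketched as a chain of stages ordered from cheap to expensive. The names `run_cascade`, `easy_stage`, and `hard_stage`, and the thresholds, are hypothetical; the source does not describe the actual stage structure.

```python
from typing import Callable, List, Optional, Tuple

# Each stage pairs an evaluator with the minimum score needed to advance.
Stage = Tuple[Callable[[str], float], float]


def run_cascade(candidate: str, stages: List[Stage]) -> Optional[float]:
    """Run stages in order (cheapest first) and stop at the first failure.

    Returns the final stage's score, or None if the candidate was rejected.
    """
    score: Optional[float] = None
    for evaluator, threshold in stages:
        score = evaluator(candidate)
        if score < threshold:
            return None  # rejected early; later, costlier stages never run
    return score


hard_calls = [0]  # counts how often the expensive stage actually runs


def easy_stage(candidate: str) -> float:
    """Cheap sanity check: the candidate must be non-empty."""
    return 1.0 if candidate.strip() else 0.0


def hard_stage(candidate: str) -> float:
    """Stand-in for an expensive evaluation."""
    hard_calls[0] += 1
    return float(len(candidate))


stages = [(easy_stage, 1.0), (hard_stage, 0.0)]
results = [run_cascade(c, stages) for c in ["", "abc", "  "]]
```

Of the three candidates, only `"abc"` reaches the expensive stage, so the costly evaluator runs once instead of three times. That is the compute saving the cascade is meant to provide.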

AlphaEvolve vs. FunSearch

AlphaEvolve is a substantial upgrade over its predecessor, FunSearch:

- Evolution scope: FunSearch evolves a single function. AlphaEvolve evolves entire files with multiple functions.

- Code scale: FunSearch handles 10-20 lines. AlphaEvolve handles hundreds.

- Language support: FunSearch is Python-only. AlphaEvolve supports any language.

- Evaluation time: FunSearch needs each evaluation to finish within about 20 minutes on a single CPU. AlphaEvolve can evaluate for hours, in parallel, on accelerators.

- LLM efficiency: FunSearch requires millions of LLM samples. AlphaEvolve uses orders of magnitude fewer samples.

- Model scaling: FunSearch saw no benefit from larger LLMs. AlphaEvolve benefits directly from stronger models.

- Optimization: FunSearch optimizes a single metric. AlphaEvolve optimizes multiple metrics simultaneously.
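To make the last point in the comparison concrete, here is one standard way to compare candidates on several metrics at once: keep the set that no other candidate beats on every metric (a Pareto front). The source does not say how AlphaEvolve combines metrics, so this is a swapped-in textbook technique, and the candidate names and metric values are invented for illustration.

```python
from typing import Dict, List

Metrics = Dict[str, float]  # metric name -> value, higher is better


def dominates(a: Metrics, b: Metrics) -> bool:
    """True if a is at least as good as b everywhere and better somewhere."""
    return all(a[k] >= b[k] for k in a) and any(a[k] > b[k] for k in a)


def pareto_front(candidates: Dict[str, Metrics]) -> List[str]:
    """Names of candidates that no other candidate dominates."""
    return [
        name
        for name, metrics in candidates.items()
        if not any(
            dominates(other, metrics)
            for other_name, other in candidates.items()
            if other_name != name
        )
    ]


# Hypothetical scores for three candidate programs.
candidates = {
    "fast_but_wrong": {"accuracy": 0.6, "speed": 9.0},
    "balanced": {"accuracy": 0.9, "speed": 5.0},
    "dominated": {"accuracy": 0.8, "speed": 4.0},
}
front = pareto_front(candidates)  # ["fast_but_wrong", "balanced"]
```

With a single metric there is always one winner. With several, distinct trade-offs can all be worth keeping, which is one reason multi-metric search can maintain a more diverse pool of candidates.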

Three breakthroughs

AlphaEvolve infrastructure results (Source: Google DeepMind)

DeepMind reports three headline results: one mathematical discovery and two optimizations deployed in Google's production infrastructure.

- Matrix multiplication. AlphaEvolve discovered a rank-48 algorithm, meaning one that uses 48 scalar multiplications, for multiplying two 4x4 complex-valued matrices. Applying Strassen's 1969 algorithm recursively needs 49, and this is the first improvement on that count for the 4x4 complex case since then. It is a provably correct mathematical discovery, not an incremental optimization.

-

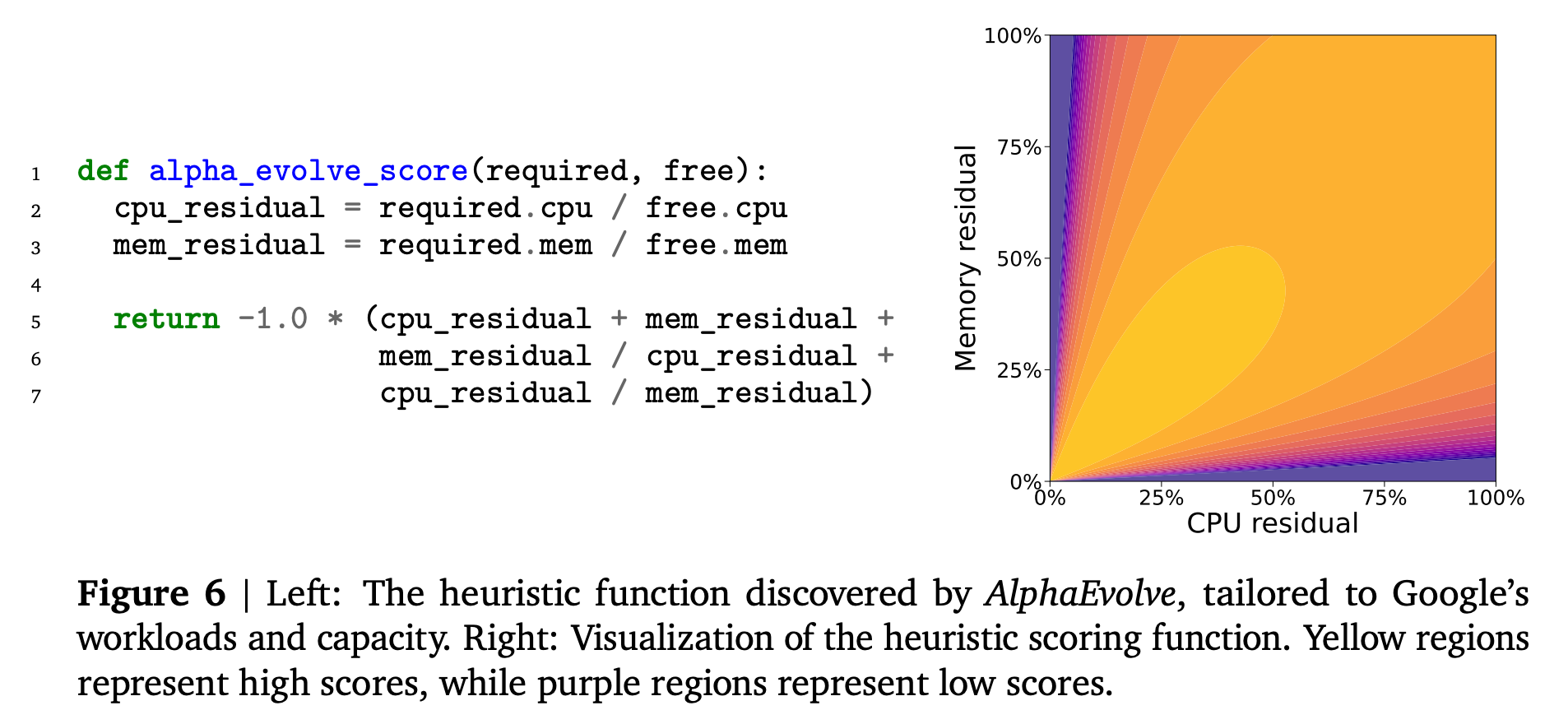

Data center scheduling. Google's Borg scheduler orchestrates compute jobs across its fleet. AlphaEvolve discovered a heuristic that recovers 0.7% of previously stranded CPU/RAM resources in production.

Borg scheduler optimization (Source: Google DeepMind)

Borg scheduler optimization (Source: Google DeepMind)

The absolute scale is what makes the percentage notable.

By DeepMind's own estimate, 0.7% of Google's compute fleet translates to enough headroom to run hundreds of additional large-scale AI models, or the energy equivalent of powering thousands of homes for a year.

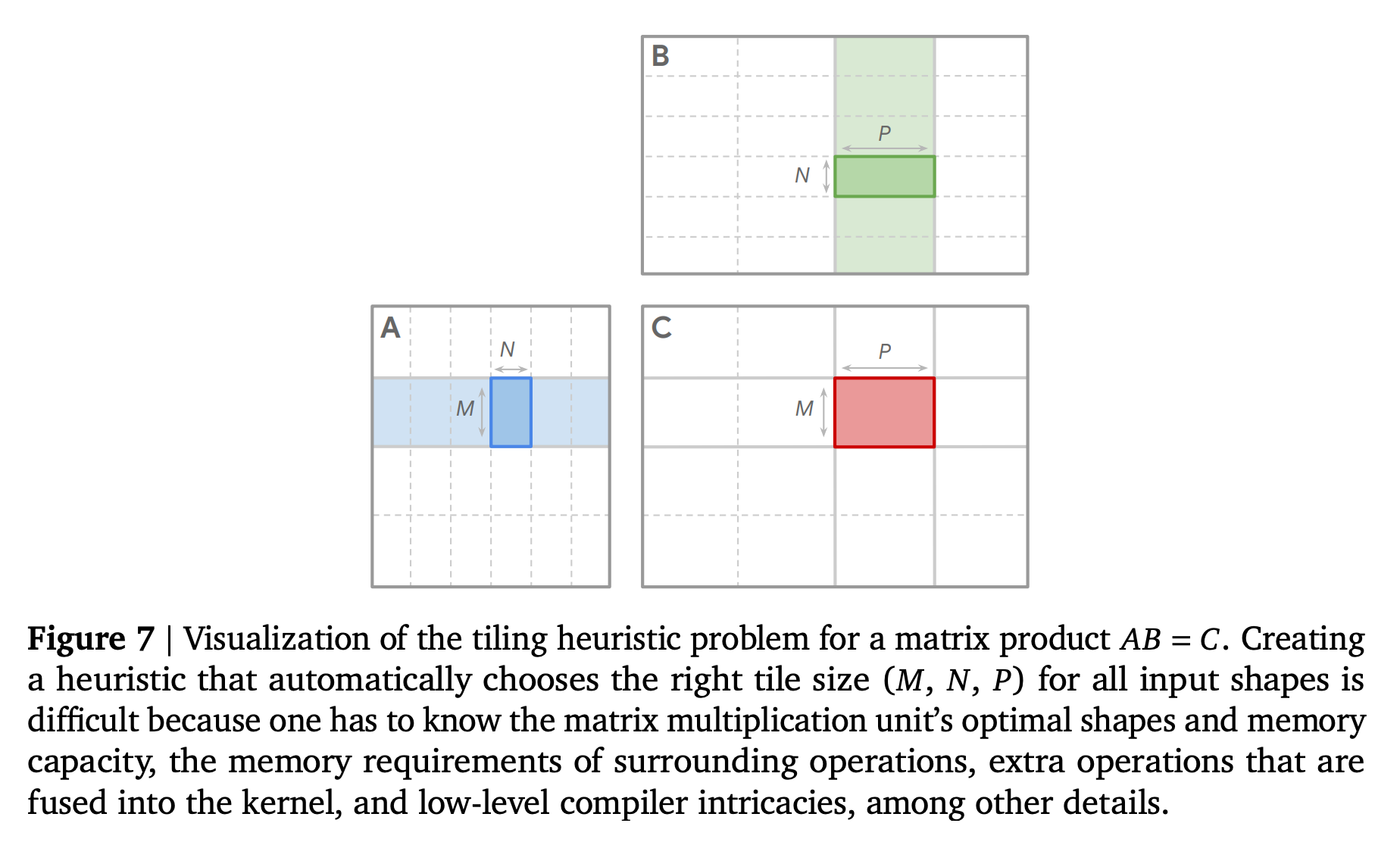

- Gemini training kernels. AlphaEvolve optimized the tiling strategy for matrix multiplication within Gemini's TPU kernels, yielding a 23% kernel speedup and roughly 1% end-to-end training time reduction.

Kernel speedup results (Source: Google DeepMind)
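For context on the matrix multiplication result, the sketch below counts scalar multiplications for the baseline that AlphaEvolve improved on: Strassen's 2x2 scheme (7 multiplications instead of 8) applied recursively to 4x4 complex matrices. It does not reproduce AlphaEvolve's 48-multiplication algorithm. The helper names are this example's own, and the test matrices are arbitrary.

```python
from typing import List, Tuple

Matrix = List[List[complex]]


def add(a: Matrix, b: Matrix) -> Matrix:
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def sub(a: Matrix, b: Matrix) -> Matrix:
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def split(m: Matrix) -> Tuple[Matrix, Matrix, Matrix, Matrix]:
    """Split a square matrix into four equal quadrants."""
    h = len(m) // 2
    return ([r[:h] for r in m[:h]], [r[h:] for r in m[:h]],
            [r[:h] for r in m[h:]], [r[h:] for r in m[h:]])


def strassen(a: Matrix, b: Matrix, counter: List[int]) -> Matrix:
    """Multiply square matrices (size a power of 2), counting scalar products."""
    if len(a) == 1:
        counter[0] += 1
        return [[a[0][0] * b[0][0]]]
    a11, a12, a21, a22 = split(a)
    b11, b12, b21, b22 = split(b)
    # Strassen's seven block products.
    m1 = strassen(add(a11, a22), add(b11, b22), counter)
    m2 = strassen(add(a21, a22), b11, counter)
    m3 = strassen(a11, sub(b12, b22), counter)
    m4 = strassen(a22, sub(b21, b11), counter)
    m5 = strassen(add(a11, a12), b22, counter)
    m6 = strassen(sub(a21, a11), add(b11, b12), counter)
    m7 = strassen(sub(a12, a22), add(b21, b22), counter)
    c11 = add(sub(add(m1, m4), m5), m7)
    c12 = add(m3, m5)
    c21 = add(m2, m4)
    c22 = add(add(sub(m1, m2), m3), m6)
    top = [left + right for left, right in zip(c11, c12)]
    bottom = [left + right for left, right in zip(c21, c22)]
    return top + bottom


def naive(a: Matrix, b: Matrix, counter: List[int]) -> Matrix:
    """Schoolbook multiplication: n^3 scalar products."""
    n = len(a)
    out = [[0j] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            for k in range(n):
                counter[0] += 1
                out[i][j] += a[i][k] * b[k][j]
    return out


# Small integer-valued complex entries keep the arithmetic exact.
A = [[complex(r + c, r - c) for c in range(4)] for r in range(4)]
B = [[complex(r * c, 1) for c in range(4)] for r in range(4)]
strassen_count, naive_count = [0], [0]
C_strassen = strassen(A, B, strassen_count)
C_naive = naive(A, B, naive_count)
```

The schoolbook method uses 4^3 = 64 scalar multiplications, and two levels of Strassen use 7 x 7 = 49. A rank-48 algorithm removes one more multiplication from a count that had stood since 1969.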

Why it matters

AlphaEvolve demonstrates that LLM-driven evolutionary search can produce verified discoveries in mathematics and meaningful optimizations in production infrastructure. The Gemini kernel result is particularly notable: an AI system improving the efficiency of its own training pipeline.

The constraint is the evaluation function. AlphaEvolve works where solutions can be scored automatically. Problems requiring manual testing, subjective judgment, or physical experimentation remain out of scope. The boundary of what this approach can reach depends directly on how well the scoring function can be defined and automated.

The system also shows clear benefits from model scaling, unlike FunSearch. As frontier models improve, the quality of candidates AlphaEvolve generates should improve correspondingly, widening the range of problems it can tackle.